As AI adoption increases globally, inference has become the largest consumer of electricity in AI computing. This shift calls for a new strategy to manage how and where compute consumes energy in real time.

Responding to this market demand and drawing on more than a decade of experience in energy management for data centers, LōD Technologies has announced the launch of CLōD, the world’s first compute flexibility platform designed specifically for AI inference workloads.

The system introduces a new paradigm for data center flexibility by aligning AI compute demand with real-time grid conditions.

“Electric grids rely on price signals to balance supply and demand,” said Medi Naseri, CEO of LōD Technologies. “CLōD extends that same mechanism to AI compute. When the grid sends a signal, workloads can respond automatically without disrupting applications or end users.”

Within days of launch, CLōD has processed billions of inference tokens and attracted more than 2,000 developers to the platform, demonstrating immediate adoption of a new approach to managing AI workloads and energy consumption that translates into savings for data centers and end users.

While data center flexibility has been widely discussed across the energy and infrastructure sectors, most initiatives have remained limited to concept papers, pilot programs, or demand response experiments that require operators to throttle or curtail operations during grid events, which degrades the end-user experience. These approaches introduce operational risks and often conflict with service-level agreements (SLAs).

Built on LōD’s patented energy-aware compute orchestration technology, CLōD represents the first successful production implementation of flexibility for AI inference workloads, moving the concept beyond theoretical discussions and pilot programs into real-world operation.

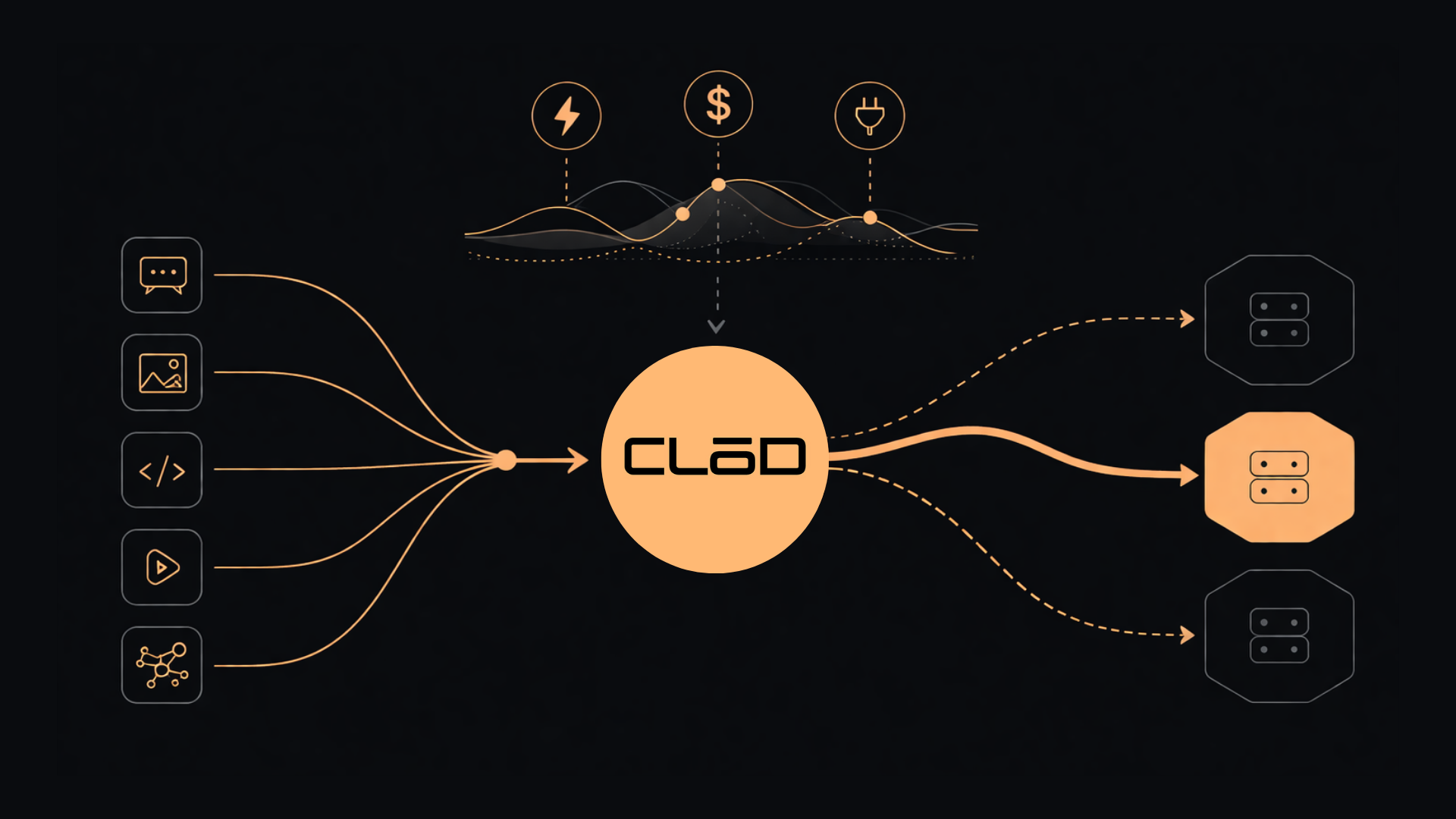

This platform encourages flexibility by responding to market signals at the application layer. It dynamically adjusts the pricing of inference tokens based on real-time electricity prices, grid conditions, and active demand response programs. This allows workloads to be routed to locations and times where energy is cheaper and more readily available.
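The pricing and routing idea described above can be illustrated with a minimal Python sketch. This is not CLōD's actual pricing model or API: the `Region` type, the `token_price` and `route` functions, the base rate, the reference electricity price, and the linear discount rule are all hypothetical assumptions chosen for illustration. Only the 60% discount ceiling comes from the figures LōD has published.

```python
from dataclasses import dataclass

# All constants below are illustrative assumptions, not CLoD parameters.
BASE_PRICE_PER_MTOK = 1.00     # hypothetical market rate, USD per million tokens
REFERENCE_POWER_PRICE = 50.0   # hypothetical reference electricity price, USD/MWh
MAX_DISCOUNT = 0.60            # discount ceiling (the announcement cites "up to 60%")


@dataclass(frozen=True)
class Region:
    name: str
    power_price: float         # real-time electricity price, USD/MWh
    demand_response: bool      # True while a demand response event is active


def token_price(region: Region) -> float:
    """Hypothetical rule: cheaper power earns a larger discount, capped at MAX_DISCOUNT."""
    if region.demand_response:
        # Assumed behavior: no discount during a grid event, which steers load elsewhere.
        return BASE_PRICE_PER_MTOK
    ratio = region.power_price / REFERENCE_POWER_PRICE
    discount = min(MAX_DISCOUNT, max(0.0, 1.0 - ratio))
    return round(BASE_PRICE_PER_MTOK * (1.0 - discount), 4)


def route(regions: list[Region]) -> Region:
    """Send the workload to the region with the lowest current token price."""
    return min(regions, key=token_price)


if __name__ == "__main__":
    regions = [
        Region("region-a", power_price=50.0, demand_response=False),
        Region("region-b", power_price=25.0, demand_response=False),
        Region("region-c", power_price=5.0, demand_response=True),
    ]
    for r in regions:
        print(r.name, token_price(r))
    print("routed to:", route(regions).name)
```

In this sketch, a region under an active demand response event keeps the full price even when its electricity is cheap, so price-sensitive workloads move to other regions without any throttling at the data center itself.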

Besides adding flexibility to data centers and supporting the grid, CLōD delivers discounts of up to 60% compared to market rates, encouraging end users to adopt flexible compute with zero integration required from data centers or cloud providers. Because flexibility is implemented at the routing and pricing layer, performance remains largely unaffected, with early deployments showing a maximum additional latency of roughly 50 milliseconds.

The launch of CLōD represents a major milestone in LōD’s broader vision of transforming computing into grid-interactive infrastructure. By aligning the economics of AI compute with the economics of electricity markets, the company believes large-scale computing infrastructure can become an asset to the grid rather than a source of stress.

To learn more, visit www.lod.io/ai-inference-solution

LōD Technologies builds energy-intelligent computing solutions that optimize the flow of energy and workloads across data centers. Its platforms enable computing resources such as AI inference, AI training, and Bitcoin mining to operate as flexible loads that respond to real-time electricity market conditions. By integrating energy markets directly into compute orchestration, LōD helps operators reduce costs, lower emissions, and unlock new grid services while maintaining reliable computing performance.