Burçin Ünel is the research and policy director of the Institute for Policy Integrity at New York University School of Law. Anamika Dubey is a Huie-Rogers Endowed Chair associate professor of electrical engineering at Washington State University. Chiara Lo Prete is associate professor of energy economics at Pennsylvania State University.

A familiar debate is heating up about the need to build more gas pipelines.

During extreme cold weather, demand for both electric and gas heating rises. This demand from gas-heating customers and gas-fired electric generators can drive up the price of gas and potentially strain the pipeline capacity to transport it. Add inadequate winterization, freezing equipment and failures at the electric distribution level to the mix, and we get the usual headlines of thousands of people without power.

So, the argument goes, we need more pipelines to survive extreme winter weather. And maybe we do. But as a group of researchers modeling how the gas and electric systems interact during disasters, we are here to tell you that "more steel" isn't the first answer.

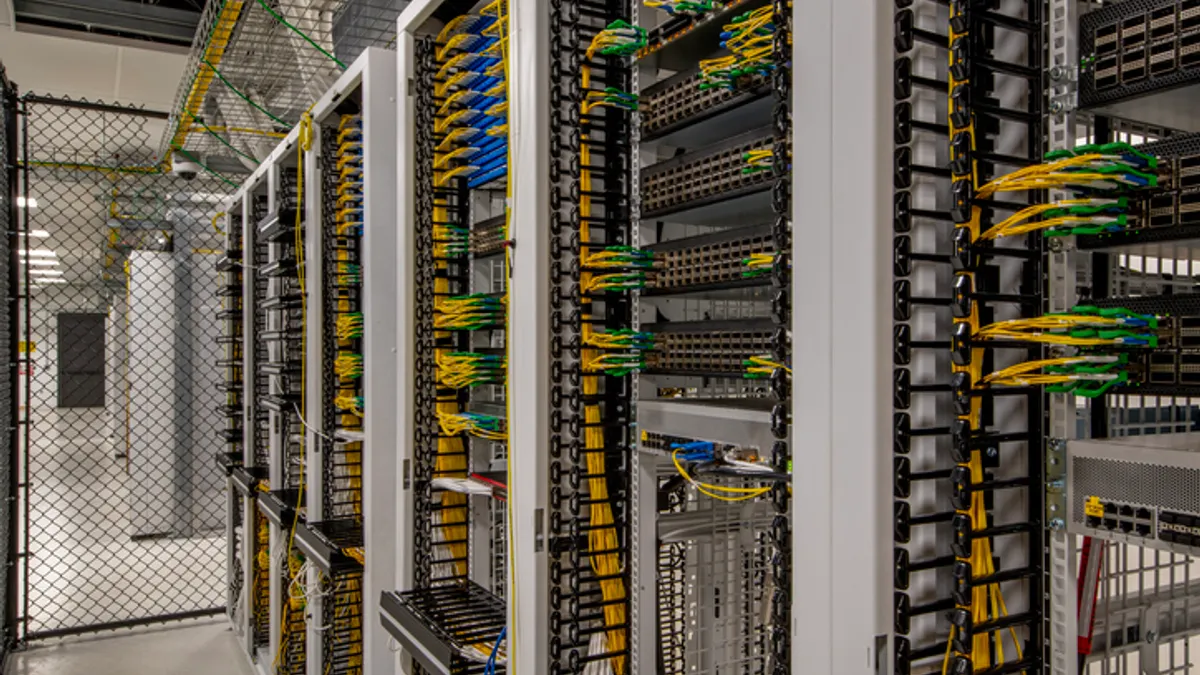

In fact, before we spend billions on infrastructure, we need to invest in something much cheaper: data.

It is possible to plan, utilize and strengthen our nation’s energy infrastructure to be more reliable and resilient in the face of extreme weather. But, right now, many of us who are working on the resilient grid of the future are effectively flying blind.

In our recent modeling work, we have been building an integrated gas-electric model to study different policy options to utilize existing infrastructure more effectively, incorporating predictions about how generators fail in extreme cold and how such failures affect grid reliability. What we found was that the biggest obstacle wasn’t a lack of engineering know-how or policy tools to address the design mismatches between gas and electric markets; it was a lack of basic information.

The mystery of the broken generator

To better model the interactions between the gas and electric systems, utilize existing infrastructure more effectively and, if truly needed, build new infrastructure in a way that can minimize blackouts, we need to be able to simulate the future under extreme weather conditions. We need models that tell us, "If the temperature hits -10°F, these generators are likely to freeze up."

To calibrate those models, we look at past data from sources like the North American Electric Reliability Corp.’s Generating Availability Data System to see when things broke in the past. But that data itself is insufficient.

Currently, public data mostly just tells us when a generator failed. It's like studying a car crash but knowing only the time of day it happened. We don't know where the generator was located, whether it was running at full power or sitting idle at the time, what the local weather was at that unit, or whether the pipeline serving it was congested or had spare capacity.

Without knowing how a generator was operating before it failed, we can't reliably explain why it failed, let alone predict the next failure. We are left guessing why one plant froze while another kept running. To ensure our grid is resilient, we need data on the "normal" days, not just the bad ones.

We are forced to make assumptions to fill in these gaps. But if you are planning for life-or-death weather events, assumptions are a scary foundation.

The ‘End User’ guessing game

For power-system modelers, finding and merging datasets on the electric side is tricky, but the gas side is like a detective novel with missing chapters.

To keep the lights on in winter, gas generators need natural gas. But they aren't the only ones in line — and might be last in line, depending on what service they can pay for. They share pipelines with factories, homes and businesses. To model resilience in a way that takes into account the human consequences of power outages, we need to know who is taking gas from the pipeline and when, and who buys from whom in the secondary markets.

Here is one data problem among many: pipeline operators' datasets often lump different customers together under a generic label called "End User."

Is that "End User" a critical generator keeping a hospital running? Or is it a large factory making cardboard boxes? We often can't tell. We also can't easily see what's happening "behind the city gate" of a local gas utility. We don't know whether the gas flowing into a city is going to residential furnaces, industrial boilers or gas generators. Some consumption and contract data are reported only monthly; others are not reported at any temporal or spatial resolution.

There is also no easy way to understand each utility's incentives for releasing unused gas transportation capacity, which depend on the utility's individual contracts and are governed by state tariffs.

We are forced to make assumptions to fill in these gaps. And once again, assumptions are scary when you are planning for life-or-death weather events.

The bottom line

These are just a few examples, and they don't even include the additional complications arising from gas nomination cycles or gas market structures. Some of the data we need exists but is proprietary. Other data is held only by pipeline or grid operators. Some data exists only in rate-case dockets. State but not federal regulators have access to some data, and vice versa. And, of course, we need to be mindful of the sensitivity of critical infrastructure data where it exists. But there are ways to address those concerns while still meaningfully aggregating and disclosing data.

The bottom line is that researchers, policymakers and regulators need more and better data access, because we can't manage what we can't measure. Building energy infrastructure takes years, billions of dollars and massive political capital. Better data costs a fraction of that, and the work can start tomorrow.

If we want to keep the heat on during the next big freeze, we need to stop guessing and start measuring.