Amanda Simonian is CMO at TerraFlow Energy, a Texas-based developer of long-duration storage infrastructure.

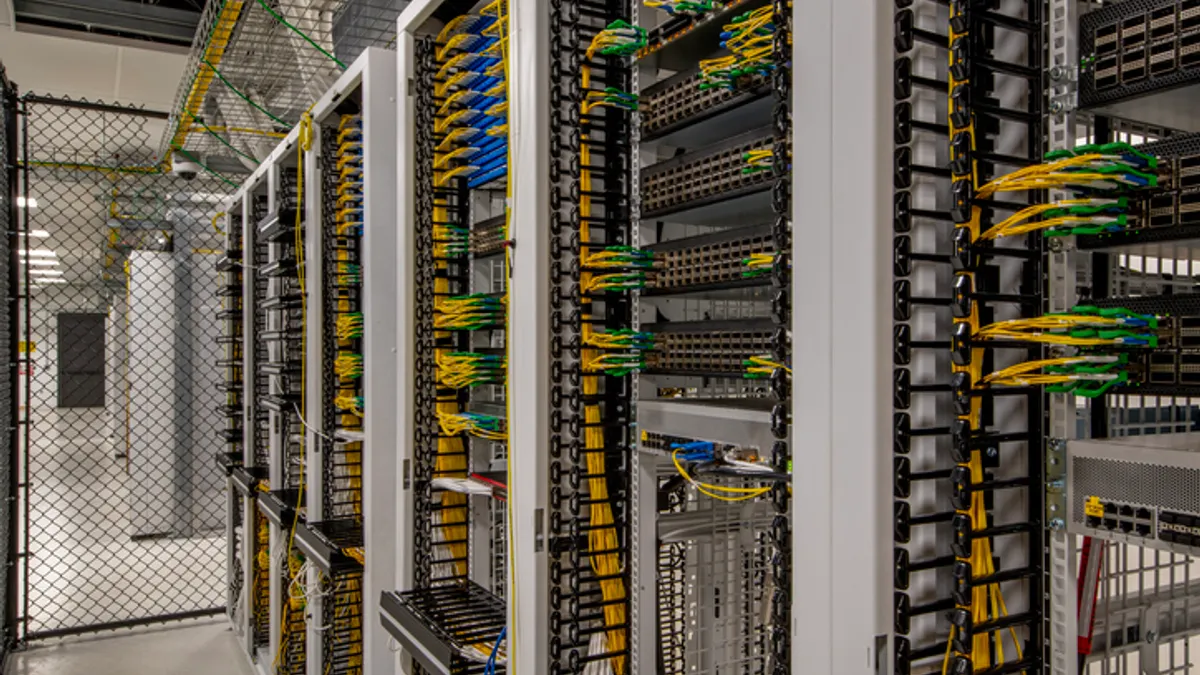

AI data centers are running into problems that no one wants to talk about publicly. Equipment is wearing out faster than expected. Backup systems are behaving unpredictably. Operators are being forced to troubleshoot issues that were never part of the plan. And a lot of people are quietly asking the same question: why is this happening so early, and so often?

The answer is simpler than it sounds. Power behavior inside modern AI campuses is fundamentally different from what the industry is used to. These facilities aren’t drawing a steady load. Their demand swings up and down in ways that stress every asset behind the meter and every feeder in front of it.

Most of the people dealing with these issues are doing it quietly. They share the patterns privately, in engineering reviews and late-night calls, but not in public forums. No one wants their site to be the first example. No one wants to admit a power system designed for normal data center behavior is struggling under AI behavior. But the truth is already known inside the groups that run, fix, or study these systems. If you are seeing failures at AI sites, you are not alone.

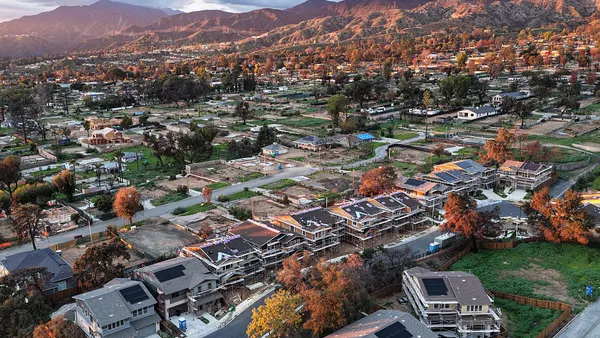

In some cases, utilities have stepped in directly. A newly energized data center phase can be told to disconnect because its volatility is destabilizing a local feeder. Those conversations are happening. They are not theoretical, and they are not rare. They just aren’t public (yet).

The common thread is simple. These assets were never designed for constant, unpredictable power movement. AI created a new kind of load, and the industry tried to support it with the same tools it has always used. It is not surprising that those tools are showing stress.

If AI developers want to build responsibly, and if utilities want to maintain local stability, then the architecture has to change. A volatility buffer needs to sit between the data center and the grid. That buffer has to cycle deeply without penalty, respond instantly, run continuously, and do so without degrading or overheating. It needs hours, not minutes, of flexibility. And it needs to operate inside controlled environments, not sit exposed to temperature swings that test its limits.

Long-duration batteries are one example of a technology that aligns well with this new operating reality.

They do not rely on tightly managed cell balancing or operate near thermal limits. They tolerate deep, frequent cycling and can sustain output at partial load for extended periods without accelerated degradation. When engineered as a dedicated stability layer, these systems absorb volatility at the source and reduce stress on both on-site equipment and the surrounding grid. The goal is not to oversize infrastructure, but to design intentionally for the power behavior AI actually produces.

This is not a debate about which technology is better. It is a recognition that the world changed and the architecture did not.

So, let’s say it out loud. These discussions are already happening behind closed doors. Bringing them into the open is not about blame or alarm. It is about alignment. The load profile has changed. Our infrastructure design needs to change with it.